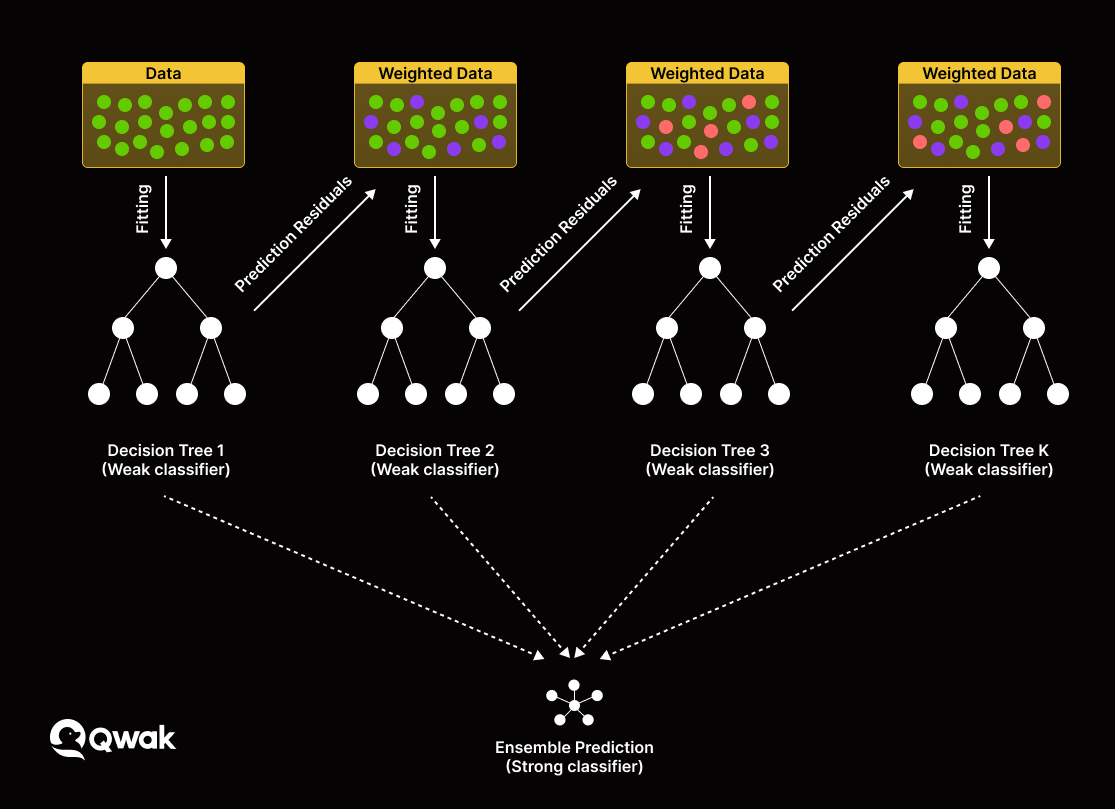

XGBoost vs random forest

12/31/2023

We’ve already answered the first question as “Yes” so we can move down to the next question: Do I still have enough memory capacity in my phone for videos and photos? So again, we follow the path of “No” and go to the final question: Do I have enough disposable income to buy a new phone? My answer to that is yes, so the final decision would be to buy a new phone.

You can see that if we really wanted to, we could keep adding questions to the tree to increase its complexity. In contrast, we can also remove questions from a tree (called “pruning”) to make it simpler.

As shown in the examples above, decision trees are great for providing a clear visual for making decisions. They’re also computationally very easy to build. However, this simplicity comes with some serious disadvantages. One of them is overfitting: this is when the model (in this case, a single decision tree) becomes so good at making predictions (decisions) for a particular dataset (just me) that it performs poorly on a different dataset (other people). In our example, the further down the tree we went, the more specific the tree became to my scenario of deciding to buy a new phone. It would’ve been easy to overfit this decision tree by adding a few more questions, making the tree too specific to me and thus not generalisable to other people.

Also, decision trees can look very different depending on the question they start with. If we had created our decision tree with a different question at the beginning, the order of the questions in the tree could look very different. It isn’t ideal to have just a single decision tree as a general model to make predictions with.

In summary, decision trees aren’t really that useful by themselves despite being easy to build. This is where we introduce random forests.

Alongside random forests, we also have gradient boosting. Popular algorithms like XGBoost and CatBoost are good examples of the gradient boosting framework. In essence, gradient boosting is just an ensemble of weak predictors, which are usually decision trees.

The main difference between random forests and gradient boosting lies in how the decision trees are created and aggregated. Unlike random forests, the decision trees in gradient boosting are built additively; in other words, each decision tree is built one after another. However, these trees are not being added without purpose. Each new tree is built to improve on the deficiencies of the previous trees, and this concept is called boosting.

The gradient part of gradient boosting comes from the gradient of the loss function: each new tree is fitted so that adding it moves the model’s predictions in the direction that reduces the loss. If that doesn’t make any sense, then don’t worry about that for now. The main point is that each tree is added to improve the overall model. This is in contrast to random forests, which build and calculate each decision tree independently.

Another key difference between random forests and gradient boosting is how they aggregate their results. In random forests, the results of the decision trees are aggregated at the end of the process (for example, by averaging or by majority vote). Gradient boosting doesn’t do this and instead aggregates the results of each decision tree along the way to calculate the final result.

Overall, gradient boosting usually performs better than random forests, but it is prone to overfitting; to avoid this, we need to remember to tune the parameters carefully. Gradient boosting is really popular nowadays thanks to its efficiency and performance. Hopefully, this post can clarify some of the differences between these algorithms.

This was part of the Beginner Data Science series so if you enjoyed this article, you can check out my other videos on YouTube. If you want to see what I’m up to via email, you can consider signing up to my newsletter.
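As a rough sketch of the two approaches compared in this post, the toy code below builds both ensembles from one-question trees ("stumps") in plain Python. It is not how XGBoost or any real library is implemented. The helper names (`fit_stump`, `fit_random_forest`, `fit_gradient_boosting`), the toy dataset, the squared-error loss and the settings `n_trees=25` and `learning_rate=0.5` are all assumptions made for illustration. The random forest builds its trees independently on resampled data and averages them at the end, while gradient boosting adds one tree at a time, each fitted to what the current model still gets wrong.

```python
import random

def fit_stump(xs, ys):
    """Fit a one-split regression tree (a "stump") on 1-D inputs by least squares."""
    best = None
    for t in sorted(set(xs)):
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        if not left or not right:
            continue  # a split must leave points on both sides
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or err < best[0]:
            best = (err, t, lm, rm)
    if best is None:  # every x is identical, so just predict the mean
        mean = sum(ys) / len(ys)
        return lambda x: mean
    _, split, lm, rm = best
    return lambda x: lm if x <= split else rm

def fit_random_forest(xs, ys, n_trees=25, seed=0):
    """Build each tree independently on a bootstrap sample; aggregate at the end."""
    rng = random.Random(seed)
    n = len(xs)
    trees = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sample rows with replacement
        trees.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    # Aggregation happens only here: the final answer is the average of all trees.
    return lambda x: sum(tree(x) for tree in trees) / len(trees)

def fit_gradient_boosting(xs, ys, n_trees=25, learning_rate=0.5):
    """Build trees one after another, each fitted to the current model's errors."""
    base = sum(ys) / len(ys)  # start from a constant prediction
    preds = [base] * len(xs)
    trees, history = [], []
    for _ in range(n_trees):
        # For squared-error loss, the negative gradient is simply the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        tree = fit_stump(xs, residuals)
        trees.append(tree)
        # Aggregation happens along the way: each tree updates the running prediction.
        preds = [p + learning_rate * tree(x) for x, p in zip(xs, preds)]
        history.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys))
    predict = lambda x: base + learning_rate * sum(tree(x) for tree in trees)
    return predict, history

def mse(predict, xs, ys):
    """Mean squared error of a prediction function on a dataset."""
    return sum((y - predict(x)) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Toy data (an assumption for illustration): y = x squared on 40 evenly spaced points.
xs = [i / 10 for i in range(40)]
ys = [x ** 2 for x in xs]

forest = fit_random_forest(xs, ys)
boosted, history = fit_gradient_boosting(xs, ys)
print("random forest training MSE:", mse(forest, xs, ys))
print("gradient boosting training MSE:", mse(boosted, xs, ys))
```

The `history` list records the training error after each boosting round, so you can watch every added tree improve the overall model. Keep in mind that a shrinking training error is not the same as good performance on new data, which is why the tuning mentioned in this post (for example, the number of trees and the learning rate) still matters.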
